The two axes represent competing obligations; the good-evil axis tends to measure a character's concern for others, whereas the law-chaos axis measures the same character's concern for order. King Arthur might be considered Lawful Good, but Robin Hood would probably be seen as Chaotic Good. Conversely, a serial killer might best be described as Chaotic Evil, while a mafia hit man would be in the Lawful Evil area of the chart. Other axes have been created to represent other areas of moral concern, but the system depicted in the chart above is by far the most pervasive.

Moral alignment in tabletop gaming can be fairly arbitrary because it depends entirely on the interpretation of the GM. In classic AD&D rules, for instance, the character class of Paladin requires that a PC maintain a Lawful Good alignment; if the player begins conducting the PC in a way that runs counter to that alignment, the GM has the option (and, some might argue, the responsibility) to rescind the PC's Paladin status, along with its attendant powers, and force the player to run the PC as a common fighter. What constitutes sufficient deviation from the PC's alignment, however, is completely up to the GM.

In computer RPGs, the GM is digital, which poses some interesting issues for games that incorporate moral alignment. Ambiguous and complex ethical decisions must ultimately be reduced to data that the game can process. Once a game establishes moral agency (see the previous post on this subject), there are two possible options for dealing with the consequences of a PC's actions.

The first option is to include some type of scale or continuum by which the game can track a PC's morality. Sometimes, this scale is purely internal; the game engine decides what is good or evil and rewards or punishes the PC accordingly -- new powers or closed-off missions. Other scales are external; the consequences of the PC's actions are meted out by NPCs who increasingly react to the PC as either a hero or a villain. A handful of games combine these two types of metrics. Please read Laura Parker's excellent Gamespot article, entitled "Black or White: Making Moral Choices in Video Games," for a more detailed account of morality systems in gaming.
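To make the idea of an internal scale concrete, here is a minimal sketch in Python. It is not taken from any actual game; the class name, the score range, the thresholds, and the point values are all assumptions chosen for illustration. It only shows how an engine might boil a PC's actions down to a single number and a label.

```python
from dataclasses import dataclass, field


@dataclass
class KarmaMeter:
    """Hypothetical internal morality scale, clamped to [-100, 100]."""
    score: int = 0
    history: list = field(default_factory=list)

    def record(self, action: str, delta: int) -> None:
        # The engine, not the player, decides what each action is worth.
        self.history.append(action)
        self.score = max(-100, min(100, self.score + delta))

    def label(self) -> str:
        # Assumed thresholds: anything between -25 and 25 counts as neutral.
        if self.score >= 25:
            return "good"
        if self.score <= -25:
            return "evil"
        return "neutral"


meter = KarmaMeter()
meter.record("rescue villager", 30)
print(meter.label())
```

Whatever nuance the player saw in the decision, the only thing that survives in a design like this is the signed number attached to it.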

The other method of creating morally rich gaming environments is simpler, but far more abstract. Some games create morally complex scenarios, but do not include any discernible consequence system beyond an NPC reaction protocol. If, for example, a PC steals from a store, he might affect the store owner's willingness to help him in the future, or perhaps get arrested by the police, but because morality is not one of the PC's measured attributes (like skill levels or hit points), future gameplay remains relatively unaffected -- especially if there are no witnesses.
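The witness-dependent approach can be sketched the same way. Again, this is a hypothetical illustration rather than the logic of any particular game; the `NPC` class, the `commit_act` helper, and the numbers are invented for the example. The point is that nothing is written to the PC at all; only the NPCs who saw the act change.

```python
from dataclasses import dataclass


@dataclass
class NPC:
    """Hypothetical NPC with a single disposition score toward the PC."""
    name: str
    disposition: int = 0  # negative = hostile, positive = friendly


def commit_act(witnesses: list, delta: int) -> bool:
    """Apply a disposition change only to the NPCs who saw the act.

    Returns True if anyone noticed, False if the act went unseen.
    """
    for npc in witnesses:
        npc.disposition += delta
    return len(witnesses) > 0


shopkeeper = NPC("shopkeeper")
guard = NPC("guard")

# Theft seen by the shopkeeper only: the guard's opinion is unchanged.
commit_act([shopkeeper], -20)

# Theft with no witnesses: no state changes anywhere.
noticed = commit_act([], -20)
```

With an empty witness list the game records nothing, which is exactly the situation in which the decision is left entirely to the player's own judgment.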

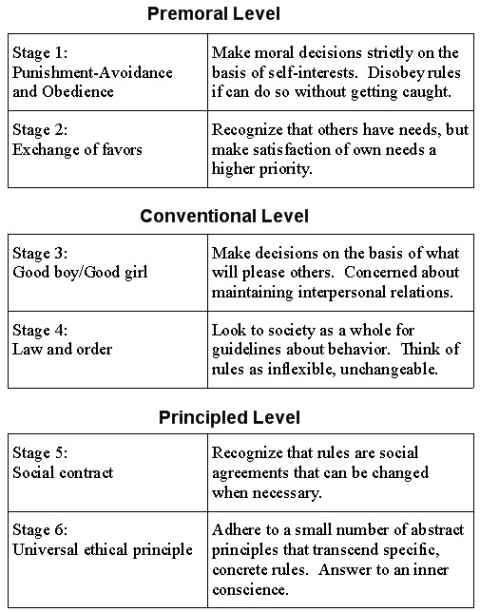

The core difference between these two approaches can be best understood through psychologist Lawrence Kohlberg's seminal work on the stages of moral development. In his 1958 dissertation, Kohlberg built on Jean Piaget's and John Dewey's work with children in order to systematically describe the process of an increasingly competent intellect "learning" morality:

[Figure: A summary of Kohlberg's stages]

Although Kohlberg's work is more complex and controversial than the scope of this study allows (see this excerpt from Theories of Development for a more in-depth treatment of Kohlberg's theories and the criticisms thereof), it forms a convenient framework for understanding the role of moral metrics in computer RPGs.

Consider Kohlberg's iconic dilemma:

Heinz Steals the Drug

In Europe, a woman was near death from a special kind of cancer. There was one drug that the doctors thought might save her. It was a form of radium that a druggist in the same town had recently discovered. The drug was expensive to make, but the druggist was charging ten times what the drug cost him to make. He paid $200 for the radium and charged $2,000 for a small dose of the drug. The sick woman's husband, Heinz, went to everyone he knew to borrow the money, but he could only get together about $1,000 which is half of what it cost. He told the druggist that his wife was dying and asked him to sell it cheaper or let him pay later. But the druggist said: "No, I discovered the drug and I'm going to make money from it." So Heinz got desperate and broke into the man's store to steal the drug for his wife. Should the husband have done that? (Kohlberg, 1963, p. 19)

Whether one answers the question "yes" or "no" is irrelevant; what matters is the reason for the decision. A Stage 1 thinker, who tends to think only of immediate benefit or punishment, might say "yes" because Heinz will be sad if his wife dies, or "no" because Heinz will go to jail if he gets caught. Conversely, a Stage 4 thinker, who sees issues of justice in terms of social norms, might say "yes" because the druggist is unfairly gouging Heinz when he obviously can't afford it, or "no" because breaking the law is never right, even in these circumstances.

Dichotomous morality engines, such as those found in Knights of the Old Republic (Light Side/Dark Side) or InFamous (Karma), label the PC "good" or "evil" and hand out the appropriate consequences, thereby encouraging the player to remain at the premoral stages (1 and 2). The player tends to make decisions based on creating optimal gameplay conditions for himself -- gaining greater powers, avoiding penalties, unlocking achievements, and the like. This is not to say that moral agency is impossible in these games; rather, the game mechanics create a Skinnerian system of positive and negative behavior modification that neither invites nor prevents moral agency. NPC disposition systems offer greater freedom, but they only function properly when NPCs are aware of the PC's actions, and even then they really invite only a Stage 3 kind of thinking.

Imagine the Heinz dilemma as a decision point in a computer RPG. If the game employs a simple, internal "red/blue" dichotomy, then one choice will be considered good and the other evil. Perhaps breaking the law is coded as an evil action, so Heinz's "Karma Meter" will slide toward the red if he chooses to save his wife. Instead of considering how stealing the medicine impacts his PC's potentially complex ethical code, the player may well be placed in the ironic position of doing something he considers wrong in order to maintain his PC's "good" status.

Games that limit their moral metrics to NPC disposition and delayed consequence (such as The Witcher and Skyrim) allow players greater agency. If we relocate Heinz from Europe to Skyrim, we create an in-game dilemma that invites higher-stage thinking. Let's say that Heinz is a PC with a high enough Sneak skill that he can expect to steal the drug without being detected by either the druggist or the guards; without a dichotomous meter or NPC reactions to consider, the player is freer to base his decision on Stage 3 thinking or above. Forcing the player to choose between loyalty to one's spouse and allegiance to the law, without inordinate concern for the impact on gameplay, encourages greater moral agency in the player.

As Parker suggests, future games may have morality engines sophisticated enough to engender moral agency on Stages 5 or 6 of Kohlberg's scale. Until then, it seems that less is more in this area. Games that create morally complex scenarios but refrain from evaluating the player's decisions allow the development of rich and multifaceted PCs who cannot be easily reduced to "good guy/bad guy" status -- in other words, PCs more like us.

